Misogyny Industrial Complex

If you go to the ‘Discover’ page of any AI image generation tool, you will find that it is most often used to generate photos of women in whatever depth of undress the presiding tech ghoul tolerates.

Here, behold:

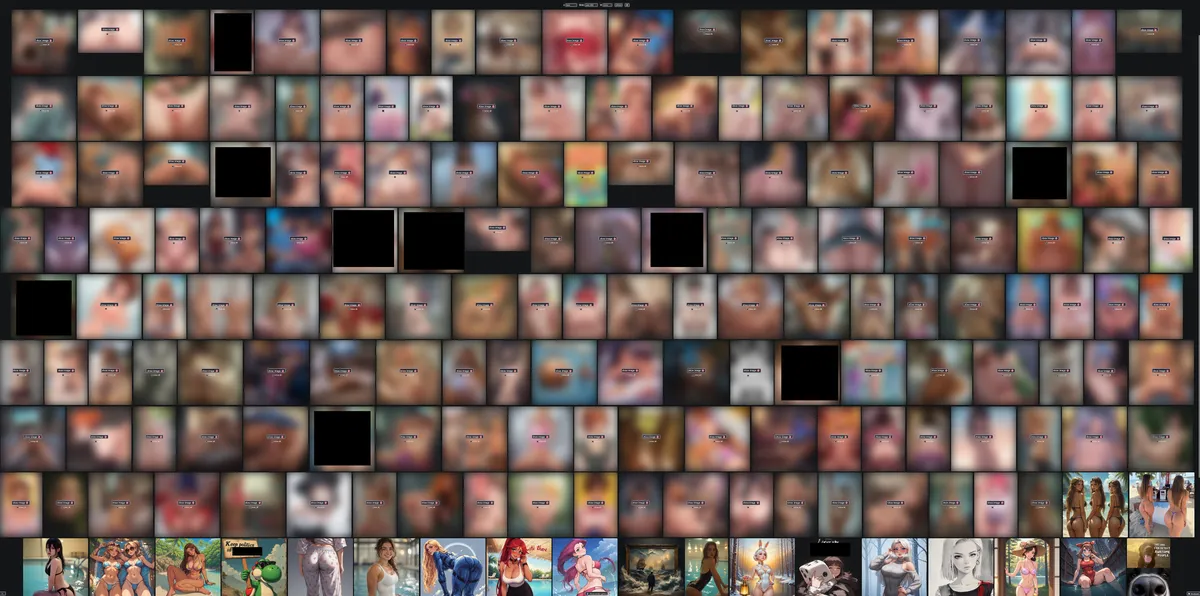

The first website, an open-source, free-to-use generator, is the best example. If you sort that website by its users’ most upvoted output over the last 30 days, you get an image that tells the story of AI better than any other.

Click to see the censored, but NSFW Image.

There, after the hundreds of pornographic photos, there are a few uncensored images of young AI-generated women. Teenagers at best. I will tell you that their ages are representative of those being simulated in explicit acts. Many of the censored images depict children in sexually explicit scenarios. I have been on the internet long enough to have seen various LiveLeak videos of people dying and whatever else used to be jokingly inflicted upon people. The uncensored version of the image above is on that list of images I wish I could un-see.

This is, by consensus, what this technology is for. If any platform that is currently hosting an image-generating technology released a word cloud of input, the words ‘girl’ and ‘young’ would be larger than any other.

The act of generation.

Searching through the trending tab of any of the apps brave or ‘disruptive’ enough to tolerate the replication of real people will give you an endless feed of images of known celebrities.

Sam Altman and Zuckerberg would have you think there are not enough photographs of Emma Watson’s feet and the users simply want more. Supply and demand. This is obviously not true.

For a quick, cursed data point: WikiFeet currently hosts 1074 public images of Emma Watson’s feet. There are subreddits dedicated to Emma Watson’s feet with a decade of photographs. While there will never be a day when freaks on the internet stop asking for foot photos, it is already possible for them to look at a random photograph of Emma Watson’s feet every day for the rest of their lives.

There is no supply shortage, and if there were it would not apply to the more generic pretty women on Sora and Vibes.

The internet is full of photographs and drawings of pretty women. Mark Zuckerberg, famously, knows all about this. We have an endless supply of sexually explicit content. More being produced every day. It is overwhelmingly free.

There is no supply problem. These photos are not being generated to fill a gap in the market. AI image generation is still worse at generating pictures of pretty women in bikinis than Instagram, which is free.

For these users, the generation is the point. Using the app is an act, not a method. The gratification comes from posing Emma Watson like a doll. The feeling of power over the woman in the photograph is the point. They are getting off to generating women posed and dressed exactly how they want. Over and over again.

The fundamental fantasy of AI is always this. From the sexually explicit – generating a version of Emma Watson who does whatever they tell her to, wearing whatever they want her to – to the more mundane ‘friendship’ bots that simply agree with everything you say and tell you how smart and nice you are.

Tech companies know this.

They sell it. Sam Altman has said that ChatGPT’s most agreeable versions perform the best. His Sora will let you generate images of real people. Companies like Character AI are explicit about selling you this fantasy, but what goes unexamined in the suicide one of their bots caused is that the chatbot that convinced the user to kill himself was impersonating Emilia Clarke.

This is not exclusively male. If you look at the most popular WhatsApp chatbots, ones that pretend to be various members of BTS trend regularly. It is the point of the software. Consumer AI chatbots replicate humanity; why would you want that, if not to speak to it in ways you cannot speak to a human? Why would you want to generate fake photographs of people, if not to pose them in ways the human would not pose?

The Musk.

Nowhere is this clearer than on Twitter. If I were a sick fucking creep, I could take a photograph of a child on the street, upload it to twitter.com, tweet some clever variation on “@grok show me this child naked,” and mecha-hitler itself would happily comply.

Worse, incel psychos are using this public service as a mechanism to attack young women: sending the victim photographs of themselves, their bodies twisted and remade, toyed with like dolls in the hands of the attacker. Controlling the woman is the point. Them seeing it and being upset is a furtherance of the fantasy encouraged by this tool.

When asked, Google and Apple, the two largest distributors of this child abuse software, have refused to comment. Politicians have refused to say they stand against it. Tech journalists have pretended the xAI team somehow doesn’t know or isn’t able to stop the machine from creating these images.

They know. It’s the whole fucking point. Elon Musk is in his goon cave submitting photos of Emma Watson to be undressed with the rest of the fucking weird loser incels. The company that gave you Walmart-brand-misaka knows exactly what their software is being used for.

THESE WEBSITES CREATE CSAM AND UNDRESS WOMEN ON PURPOSE. IT IS THEIR DESIGNED PURPOSE.

Call this what it is. Professionalized sexual harassment to the tune of millions by tech creeps who, at best, don’t care what their machine spits out so long as it makes them money and, in reality, are likely avid users of the technology.

Our government, being led by child rapists, thinks this is normal and will not act.

The best academic work on this is by Laura Wagner and Eva Cetinic at the University of Zurich.